Science-relevant things that make me smile. This is primarily aimed at resources and fascinating things for undergraduate chemistry majors. Contact chemista[at]live[dot]com with questions/comments.

College Grads and Jobs

Many people enroll in college thinking a degree will make them instantly employable. This is obviously not true.

Take a glimpse at any page of the Occupy Wall Street gallery and you're sure to find numerous examples of people who built up ruinous debt but can't find work. The Great Recession certainly hasn't helped in the US, but this is a problem universities have been talking about for decades.

Take China, for example. China has survived the global economic crisis virtually unscathed. In 2010, their economy grew by 10%. Yet ~30% of their recent college graduates don't find jobs, and thousands of those employed live in highly educated slums. China recently announced a new strategy for dealing with this problem. They are going to eliminate college majors with low employment rates. We don't know which majors yet, but in a manufacturing-based economy, finance, management, and statistics will probably fare better than poetry, history, or theoretical math.

As a liberal arts professor, I'm ambivalent about this. Part of me wants to say that higher education is (and should be) idealistic, with the goal of producing citizens who will think in new ways and be prepared for challenges undreamt. And part of me realizes that encouraging students to take on $160,000 in debt to prepare for a career in social work (salary ~$30k/year) is not demonstrating good critical thinking. And the fact of the matter is that some major always has to be the easiest, and a huge number of college students choose majors based on least resistance rather than aptitude or passion. Eliminating a major won't help these people find jobs. Instead, it will be more useful to provide students with the means to demonstrate skills employers want, facilitate internships and co-op opportunities, and require a strong work ethic in every class.

Bad science, sweet results

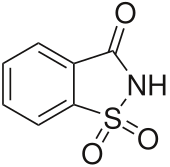

Constantin Fahlberg was a really lucky chemist. One day in 1878, he left his lab and went directly to dinner without washing his hands, and discovered that his dinner was really sweet, including his napkin. In his words:

"It flashed on me that I was the cause of the singular universal sweetness, and I accordingly tasted the end of my thumb, and found it surpassed any confectionery I had ever eaten. I saw the whole thing at once. I had discovered some coal tar substance which out-sugared sugar. I dropped my dinner, and ran back to the laboratory. There, in my excitement, I tasted the contents of every beaker and evaporating dish on the table."

Poor lab hygiene and reckless endangerment led to the discovery of saccharin, and a company that made a fortune putting the sweetener into sodas without telling anyone. When public outcry over food quality resulted in regulation, Teddy Roosevelt kept saccharin on the approved list because of his own dieting experience with the substance. It took almost 100 years before science decided that he was right: there is no indication that saccharin is unsafe. In light of obesity and diabetes rates today, it's ironic to read about how NOT having sugar in food was thought to be unhealthy. Maybe 100 years from now, someone will think that subsidizing pizza and fries in school lunches was actually reasonable.

Impact Factors

In 1955, Eugene Garfield first proposed the idea of an index to measure how often a journal's articles were cited. This was before the internet (you knew that, right?) and he was looking for an easy way to sort information. The goal was to distinguish the small-but-often-cited journals from the small-and-not-very-useful journals.

Journal Citation Reports (JCR) publishes the list of impact factors each year. These are the ones journals brag about, the ones that are listed in the journal (or in the "About this Journal" section, if you read journals online). They are frequently misinterpreted and subject to numerous criticisms. But what I really don't like about them is that they are expensive, and JCR enforces its copyright and doesn't allow lists of impact factors to be posted legally. So the only way my students can compare the impact factors of a series of journals is to look each one up individually, or find them illegally posted on Scribd.

But there are LOTS of alternatives to JCR Impact Factors. My favorite is Eigenfactor. (Don't worry, you don't need to know anything about eigenvectors to use it.) Their statistics seem more meaningful, you can compare different disciplines, they include cost-effectiveness rankings, and they are completely free and searchable. They also do a lot of great visualizations. You can easily look up any field and see which journals publish the most articles, which journals publish the most influential articles, how fields are related, how things change over time, etc. Being able to see how journals relate to each other gives a much better understanding of scientific literature than one statistical indicator.
